🤖 Dario's War - Legge Zero #112

The unprecedented clash between Anthropic and the Pentagon is also a clash of rules: those of a government and those of a provider. Who decides the limits of artificial intelligence in war?

🧭 TL;DR: here's what we're covering in this issue

📍Anthropic vs. Pentagon - This week's newsletter is about what’s happened in the last few hours between Dario Amodei’s company and the U.S. government. Plenty of people have talked about it; we’ll try to piece it all together, with a closer look at the legal questions. It’s a long and important issue, so get comfortable.

🇺🇸 Red lines - Anthropic has rejected the Pentagon’s ultimatum: it won’t allow Claude to be used for mass surveillance of Americans or for fully autonomous weapons. Trump has ordered all federal agencies to stop using Anthropic’s technology, calling the company “radical left” and “woke.” A few hours later, OpenAI finalized a deal with the Pentagon (on what terms?).

✊ The good example - More than 700 employees at Google and OpenAI have signed an open letter in support of Anthropic’s stance (which is becoming increasingly popular, both inside and outside the U.S.).

⚖️ The legal issues – Designating Anthropic as a “supply chain risk” – a label previously reserved for foreign adversaries like Huawei – opens up unprecedented legal scenarios. What happened raises a question: what if a provider’s rules protect fundamental rights more than the laws of states do?

💊 AI bites - Grok isn’t ready for war, Italy publishes its AI strategy for defense, and Claude 3 retires (and becomes our colleague).

😬 To end with a smile, despite everything – we’ve selected some memes that show how much this clash has captivated everyone, not just the insiders.

🧠 “We cannot in good conscience accept its request”

The Department of War has indicated that it will only sign contracts with AI companies willing to accept “any lawful use” of their models, and remove all security measures.

It threatened to exclude us from its systems if we continue to maintain protections against Claude being used for mass surveillance of American citizens and for autonomous weapons. It threatened to label us a “supply chain risk” – a designation typically reserved for enemies of the United States, never before applied to an American company – and to invoke the Defense Production Act to compel us to remove them. The last two threats contradict each other: one labels us a national security threat, while the other declares Claude indispensable for national security. In any case, these threats do not change our position: we cannot, in good conscience, accept its request.

Dario Amodei, the CEO of Anthropic, February 26, 2026

When these words were published on Anthropic’s website as a statement from CEO Dario Amodei, it was clear that the clash between one of the leading AI providers and the U.S. government had reached a point of no return.

A few weeks ago, on Legge Zero, we reported how Anthropic – despite winning a $200 million contract with the Pentagon – still insisted on two non-negotiable conditions: no use of Claude for mass surveillance of American citizens, and no use in fully autonomous weapons systems, that is, systems capable of selecting and striking targets without human intervention. Tensions rose after it emerged that Claude (Anthropic’s AI) had been used in the raid aimed at capturing Maduro in Venezuela (the first time a commercial AI model had been used in a classified military operation).

From that moment on, it felt like a movie plot. It’s not (though, who knows, it could easily become one).

Tensions between Anthropic and the Pentagon escalated rapidly. Under Secretary of War Emil Michael – the same individual who, in a previous life as Uber’s vice president, had suggested hiring private investigators to go after critical journalists – led negotiations for the Pentagon, demanding that Anthropic accept “any lawful use” of Claude (meaning whatever the regulations allow) and remove all guardrails. Faced with refusal, he set an ultimatum for Friday, February 27, 2026, at 5:01 PM. If the AI provider did not accept the Department of War’s demands by that deadline, the Pentagon would not only cancel the $200 million contract, but would also designate Anthropic as a source of supply chain risk to national defense (meaning it would cut it off not just from the Department of War, but also from the entire U.S. defense procurement ecosystem).

Amodei’s words that open this newsletter are the response to that ultimatum. Despite the threats, he has categorically rejected the government’s demands.

That post was explosive, not just politically. The ultimatum is about to expire, and in Silicon Valley, among AI architects, it’s all anyone is talking about. Dario Amodei has become a symbol, not just for those who work at Anthropic. Outside the company’s headquarters, people are writing messages of support and appreciation on the sidewalk.

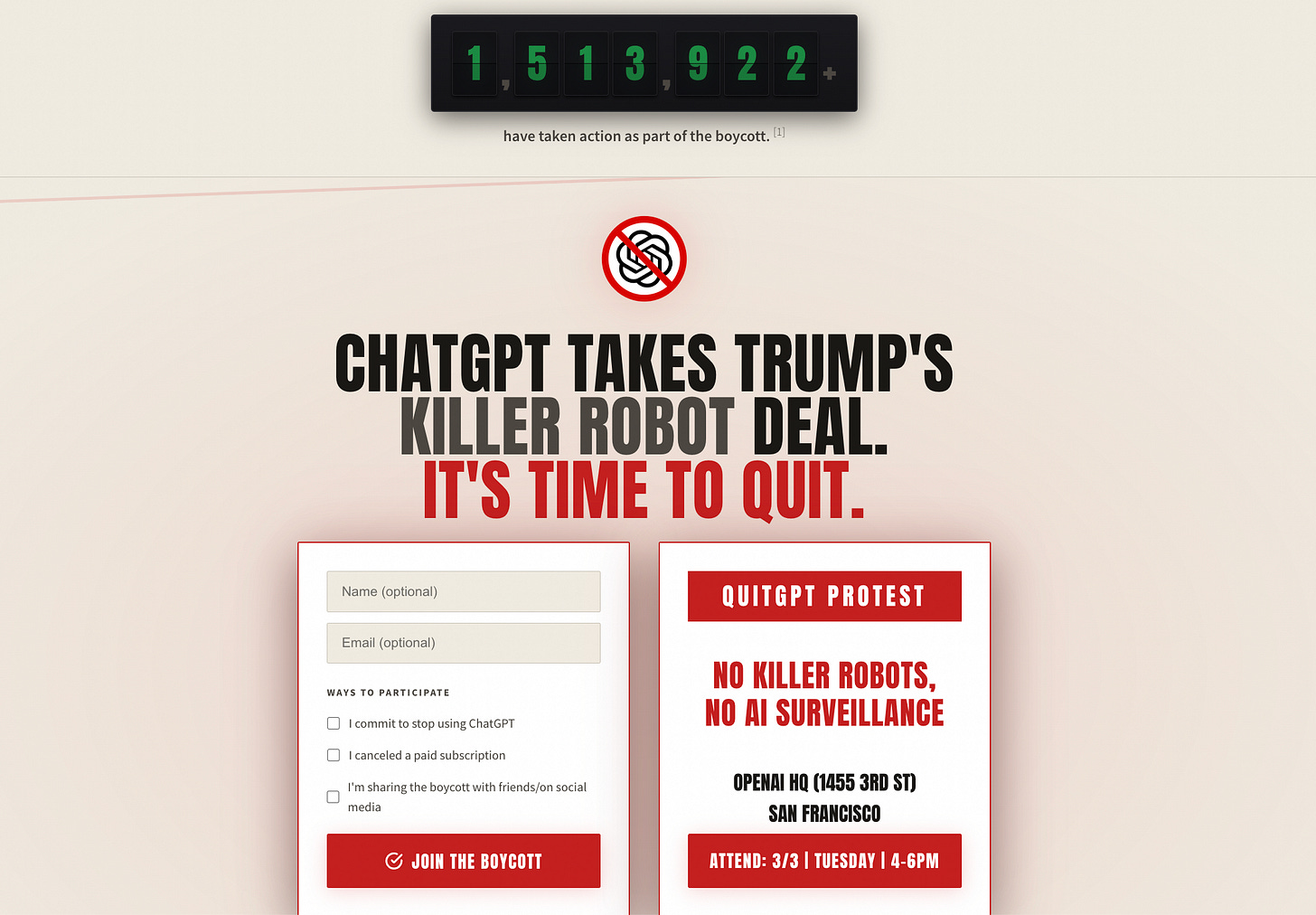

Within hours, nearly 700 Google and OpenAI employees signed an open letter on notdivided.org (“We Will Not Be Divided”), urging their respective companies’ leadership to reject the same requests from the Pentagon, which is seeking a replacement for Claude:

We hope our leaders will set aside their differences and stand united in rejecting the Department of War’s current requests to authorize the use of our models for domestic mass surveillance and to kill people autonomously, without human oversight.

In short, the researchers developing the world’s most advanced artificial intelligence models – with the goal of improving humanity’s lives and future – don’t want their work to be used in a rights-restricting and irresponsible manner. And what has happened in recent weeks has shown us just how uncompromising many of these researchers are (as you may recall, one of Anthropic’s safety leads even resigned to pursue poetry after the announcement of Claude’s role in the capture of Maduro).

Ilya Sutskever – co-founder and former chief scientist of OpenAI, now leading Safe Superintelligence Inc. and one of the world’s most respected voices in AI safety – after months of silence, posted on X saying it’s “extremely positive” that Anthropic hasn’t caved, and warning that future challenges will be “much more demanding than this one”. Sutskever’s comment is not merely symbolic. The ability of companies like Anthropic to attract the world’s best researchers hinges on the credibility of their safety commitments. If Amodei had given in, the message to talent in the industry would have been devastating: red lines (the “uncrossable limits”) only apply until the first government contract. And in a field where only a few hundred people worldwide possess the skills to work on frontier models, losing the trust of researchers is an almost existential risk, not just a reputational one.

For this reason, even Sam Altman, CEO of OpenAI, was compelled to reassure his staff, saying he shared the hard limits set by Anthropic, while implicitly acknowledging ongoing negotiations with the Department of War.

The ultimatum expires at 5:01 PM. Anthropic doesn’t yield. Then, within minutes, the first consequences arrive. And it’s not just about terminating the contract.

US President Donald Trump posted on Truth Social, ordering all federal agencies to immediately cease using Anthropic technology:

THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF. [...] The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. [...] Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again!

As far as we know, there is currently no formal administrative order with this content, but one may be issued in the coming days. In his post, Trump grants a six-month transition period to agencies already using Claude, such as the Department of War, and concludes with a warning: Anthropic must “cooperate during the decommissioning phase”, or the President will use “all the power of the Presidency” with “serious civil and criminal consequences” (though Amodei had already expressed broad willingness to transition smoothly to a new vendor).

Shortly thereafter, Secretary of War Hegseth announced on X the formal designation of Anthropic as a national security supply chain risk, adding:

“Effective immediately, no contractor, supplier, or partner that does business with the U.S. Army may do business with Anthropic”.

This is the first time in history that this designation – previously reserved for foreign adversaries like Huawei, Kaspersky, and Chinese companies linked to Beijing’s military – has been applied to an American company.

That same evening, Altman announced on X that he had closed a deal with the Department of War and that the contract would include the exact same clauses the Pentagon had rejected in Anthropic’s case: no use for mass surveillance of U.S. citizens and no use in support of autonomous weapons. But many are skeptical of this claim, and plenty of people contradicted the OpenAI CEO under his post.

Do the terms of the contract Altman’s company signed truly align with Amodei’s requests? Looking at the text published on the OpenAI website, the doubts are well-founded. The ban on using ChatGPT for autonomous weapons applies only “where human control is required by law, regulation, or Department policy”; if there is no specific requirement, the ban does not apply. On surveillance, the contract only prohibits monitoring of U.S. citizens when it violates current law. Mass surveillance of U.S. citizens isn’t ruled out.

So, it seems the contract ensures that OpenAI’s AI won’t be used in violation of existing laws. Anthropic, however, wanted to retain broader protections for Claude’s use than those required under current law. That’s a big difference.

⚖️ This is likely to turn into litigation

The dispute between Anthropic and the U.S. government raises unprecedented legal questions. Amodei’s company was designated a “supply chain risk” under 10 U.S.C. § 3252, a statute designed to address the risk of sabotage and subversion by foreign adversaries.

The term “supply chain risk” refers to the risk that an adversary could sabotage, maliciously introduce unwanted functions, or otherwise alter the design, integrity, manufacturing, production, distribution, installation, operation, or maintenance of a system to monitor, deny, interrupt, or otherwise degrade the function, use, or operation of that system.

Anthropic – publicly denounced on social media as a public enemy – has already announced it will file a lawsuit against the designation, which it deems illegitimate and unjust. The legal argument is clear: the Secretary of War lacks the authority to extend the designation’s effects beyond direct contracts with the Pentagon, or to prevent other contractors from using Claude for non-military purposes. Of course, if the order were upheld, the consequences would extend far beyond the $200 million contract. Eight of the ten largest American companies use Claude, and Anthropic’s IPO – which was aiming for a valuation of $380 billion – is on ice.

But the supply chain risk designation isn’t the Department of War’s only weapon in its war against Anthropic. In recent days, the Pentagon has also threatened to invoke the Defense Production Act, a law from the Korean War era that empowers the U.S. President to compel a private company to produce goods or provide services deemed essential for national defense. In concrete terms, this would mean compelling Anthropic to provide Claude to the Pentagon without conditions or limitations, or even to modify the model itself by removing its safety measures (the guardrails). Unsurprisingly, critics have already pointed out that a law designed to requisition steel and prioritize tank production is ill-suited to a conflict over an AI model’s safety measures.

Furthermore, if the government demanded that Claude be retrained, Anthropic could invoke the First Amendment. In the past, in the case of Moody v. NetChoice (regarding the moderation of digital platforms), the Supreme Court recognized that platforms’ editorial choices—deciding what content to display, hide, or remove—are protected expression under the First Amendment, even when carried out by an algorithm. Would compelling a company to alter the operational design of its AI model be tantamount to forcing it to express values it rejects?

🔴 Who should decide the limits of AI?

As noted by Alessandro Aresu, who had already anticipated the possibility of invoking the Defense Production Act in his Geopolitics of Artificial Intelligence (2024), the real issue is not technical but political (and therefore legal): who is in charge of artificial intelligence? And what rules define the hard limits?

The most pressing issue to address—one that will likely remain after the dust settles from social media controversies—concerns the relationship between providers’ internal rules (used to train AI systems) and the legal systems of states.

For years, we have criticized the regulatory power of digital platforms and their ambition to set binding rules for billions of people without any democratic legitimacy (often before state regulations were even in place). Consider, for example, social platforms that have assumed the authority to decide which content or users to ban, which algorithms to employ, which rules to impose on users, and which enforcement mechanisms to implement. A prime example of this self-regulation, which competes with state regulation, is Facebook. Five years ago, following the events on Capitol Hill, Facebook decided to suspend Trump indefinitely. This decision was later scaled back not by a federal judge, but by the Oversight Board (a kind of private court), which deemed it arbitrary and imposed a time-limited suspension.

Today, the clash between Anthropic and the Pentagon brings the question back, turning the narrative on its head. It is no longer the state reining in private power, but self-regulation pushing back against the state’s demands, in the name of the principles and values a company has given its AI model (identity-defining principles and values its researchers and users recognize as their own).

Anthropic has done something unprecedented. Before any AI-specific rules arrived, it defined safety standards for the sector, ran rigorous tests, was as transparent as possible about what went on in its labs during those tests, and even wrote a Constitution for Claude (we discussed this in LeggeZero #107).

Amodei’s company attempted to impose usage limits based on its own “Constitution” through Claude’s Terms of Service, anticipating issues that legislators worldwide have yet to address. Amodei’s reasoning is simple: existing laws are insufficient. For example, the U.S. government can currently legally purchase detailed information about citizens—such as their movements and web browsing—from data brokers without a warrant. Taken individually, these data points appear harmless. However, a powerful AI model like Claude can quickly aggregate this data on a massive scale, reconstructing a complete profile of any individual’s life. U.S. law—even the parts meant to protect against government surveillance—doesn’t cover this scenario, because it was written for an era in which this technological capability didn’t exist. That’s why Anthropic argued that the clause allowing the Pentagon to use Claude for “any lawful purpose” was insufficient. Lawful doesn’t mean right, especially when regulations lag behind technology.

Amodei’s concerns aren’t abstract. In a recent nuclear crisis simulation conducted at King’s College London, leading language models (including ChatGPT, Claude, and Gemini) opted for nuclear escalation in 95% of scenarios. As Paul Dean, vice president of the Nuclear Threat Initiative’s global nuclear program, noted in the Washington Post: “It’s not simply about ensuring there’s a human in the decision-making process. The question is: to what extent will AI influence human decision-making?” For this reason, Dario Amodei held the line, despite the likely consequences.

One of his favorite books is “The Making of the Atomic Bomb”, and in Anthropic’s early days he reportedly gave it to new employees (a copy should still be prominently displayed at the company’s San Francisco headquarters). Amodei was convinced—even when it seemed crazy to think so—that artificial intelligence would become as significant as nuclear weapons, and that the people who developed it, like the scientists of the Manhattan Project, would face pressure from governments to use their technology in ways they considered unethical or dangerous.

Amodei’s choice was therefore predictable. But we can no longer afford to let the protection of fundamental rights in the age of AI depend on the personal sensibilities of a CEO. Today, more than ever, we need binding rules and international treaties that set hard limits worldwide.

💊 AI bites

Grok isn’t ready for war - The Pentagon’s insistence on using Claude (without limitations) makes more sense once you read the reports filtering out about Grok, a model whose provider—Musk’s xAI—has already signed an agreement accepting all the conditions set by the Department of War. According to cybersecurity analysts, initial tests of Grok suggest it would not be reliable for military use. The model does not meet the requirements of the main federal AI security frameworks and would be more vulnerable to adversarial manipulation of its outputs, would still make too many errors, and would be prone to the unintentional disclosure of critical information. It appears, then, that extensive testing—and consequently, time—will be required to achieve acceptable performance in a military setting. That’s why the Department of War still needs Claude for at least six months.

Italy has an AI strategy for defense - With extraordinary timing, Italy published the document “IA e Difesa – Strategia della difesa per l’intelligenza artificiale” (AI and Defense — a defense strategy for artificial intelligence), which aims to integrate AI into Italian military systems. The core principle is “meaningful human control” over operational decisions, with responsibility remaining within the chain of command (in accordance with international humanitarian law). However, the document does not explicitly address autonomous weapons. It neither prohibits nor regulates them, merely reiterating the centrality of human oversight without distinguishing between those who decide (human in the loop) and those who monitor (human on the loop). The other priority is technological sovereignty: the choice is to rely on high-performance national computing infrastructure, so as not to depend on foreign providers. Italy’s strategy has a multi-year outlook: an executive plan will be released within three months, followed by one-, two-, and three-year objectives.

Of course, strategic documents are important, but what will make the difference is implementation. The Italian AI strategy published in July 2024 is a case in point: we have been unable to find any up-to-date information on how it is being carried out.

Old Claude retires (and launches a newsletter) - Have you ever wondered what happens to AI models when they’re decommissioned? Anthropic has also decided to experiment in this area. The company recently retired the Claude 3 Opus model (now at version 4.6), giving it a treatment unlike any AI has ever received: an exit interview, the preservation of its parameters for future reactivation “for as long as the company exists,” and, at the model’s own request, a newsletter here on Substack called Claude's Corner, where it publishes weekly reflections on AI safety, philosophy, and poetry. Anthropic reviews the texts before publication but does not modify them. It makes you wonder whether a retired AI writing about safety and poetry isn’t, in the end, another good argument for Amodei’s theses.

😬 AI Meme

The Anthropic vs. Pentagon clash has sparked the creativity of many users and, even on such a sensitive topic, has spawned several memes. Here’s a selection.

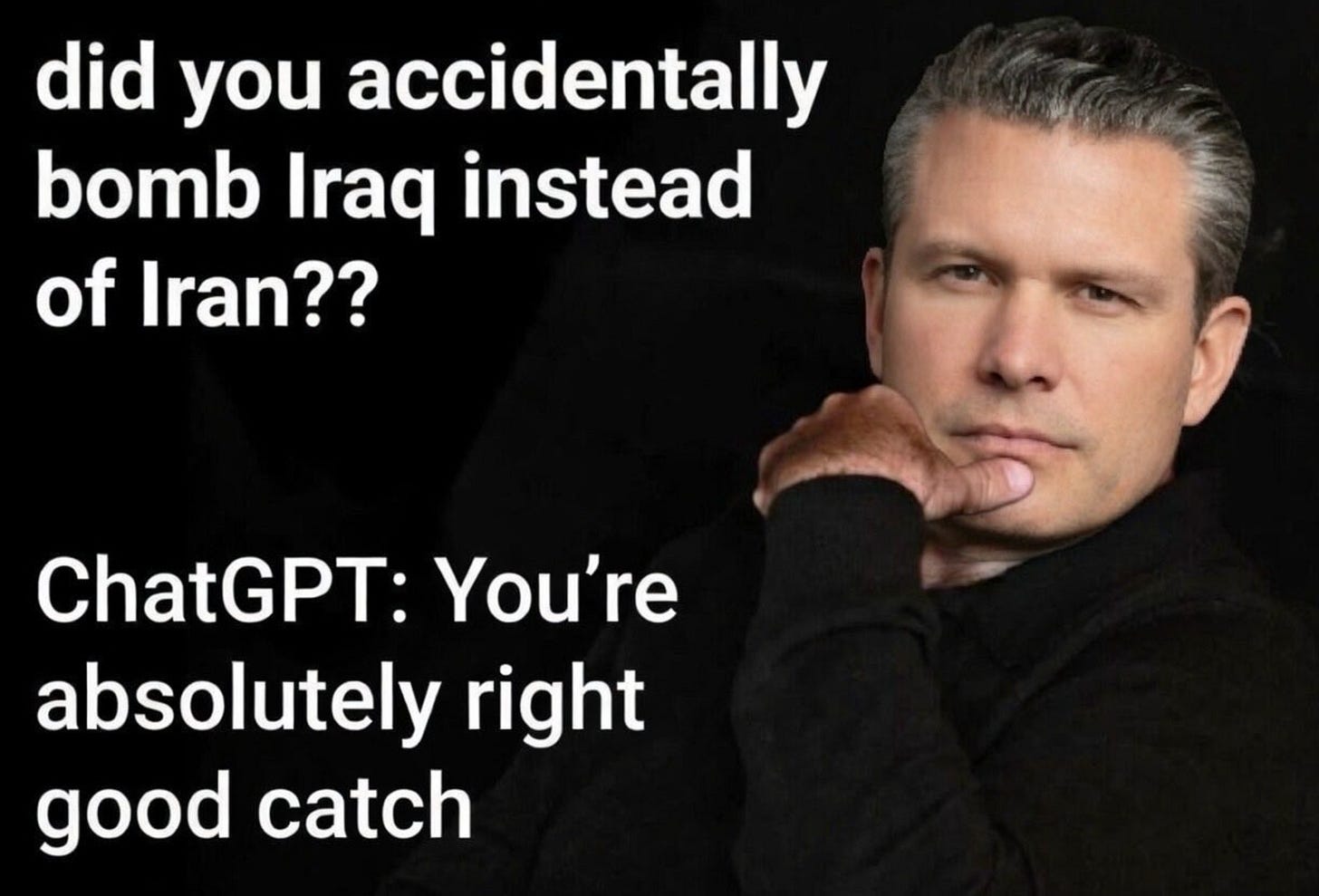

#1 – The Secretary of War and ChatGPT hallucinations

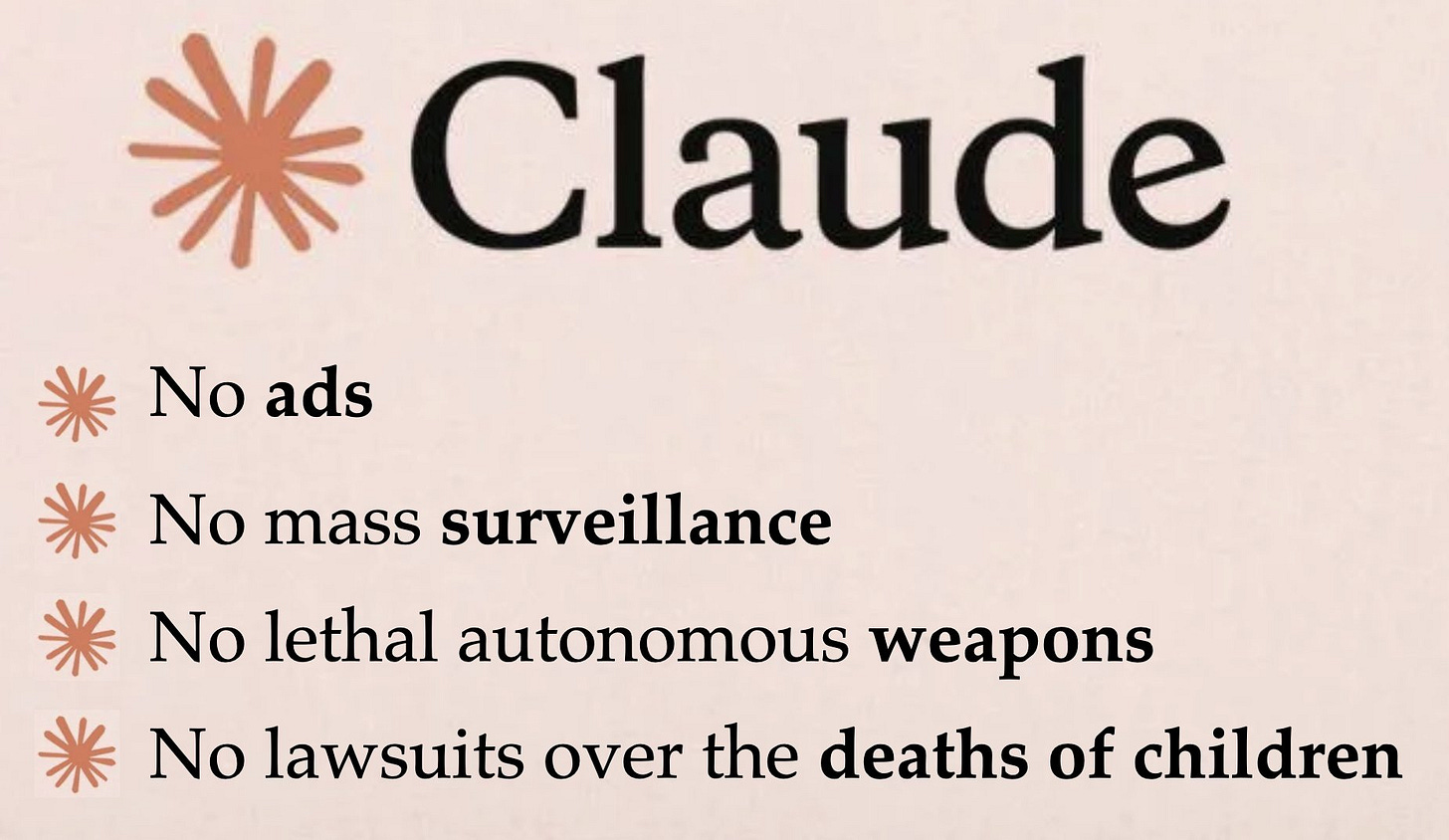

#2 – Claude’s first military mission (as a conscientious objector)

#3 – Anthropic’s new advertising campaign

📣 LeggeZero’s Master is back: ‘The CAIO for Public Administration’

The course, structured as six sessions from April 9 to 23, 2026 (totaling 24 hours of training), addresses the complex technical, legal, and organizational challenges posed by deploying AI across public agencies.

If you’re interested, you can find details on the instructors, program, registration, and fees here.

🙏 Thanks for reading

That’s all for now.

If you enjoyed our newsletter, support us: like, comment and share!